Project Rookery

Music, sound design, audio design & implementation June 7, 2020

Project Rookery is a short demo for a story-based walking simulator. It is set in Victorian London, with a focus on the slums and how people had to live there.

Rather than glorifying this period and place, the game depicts the extreme everyday horrors of Victorian London. The audio plays an important part in bringing the world to life and in supporting the story and setting. Aspects such as the feeling of guilt over your lost child and the widespread diseases are audible throughout the game.

Adaptive audio design

The audio took on a number of important roles in this project. For example, it plays a big part in conveying information about the main character to the player. You learn about her through the sounds she makes while walking around and through how she responds to what is happening around her. Her coughing tells you that she is sick, and her breathing conveys the fear and unrest she is experiencing. The world is also filled with audio coming from different objects and places around the player. You can hear the sounds of sick children or of rich women laughing coming from houses near you. Furthermore, the musical arc communicates the course of the story.

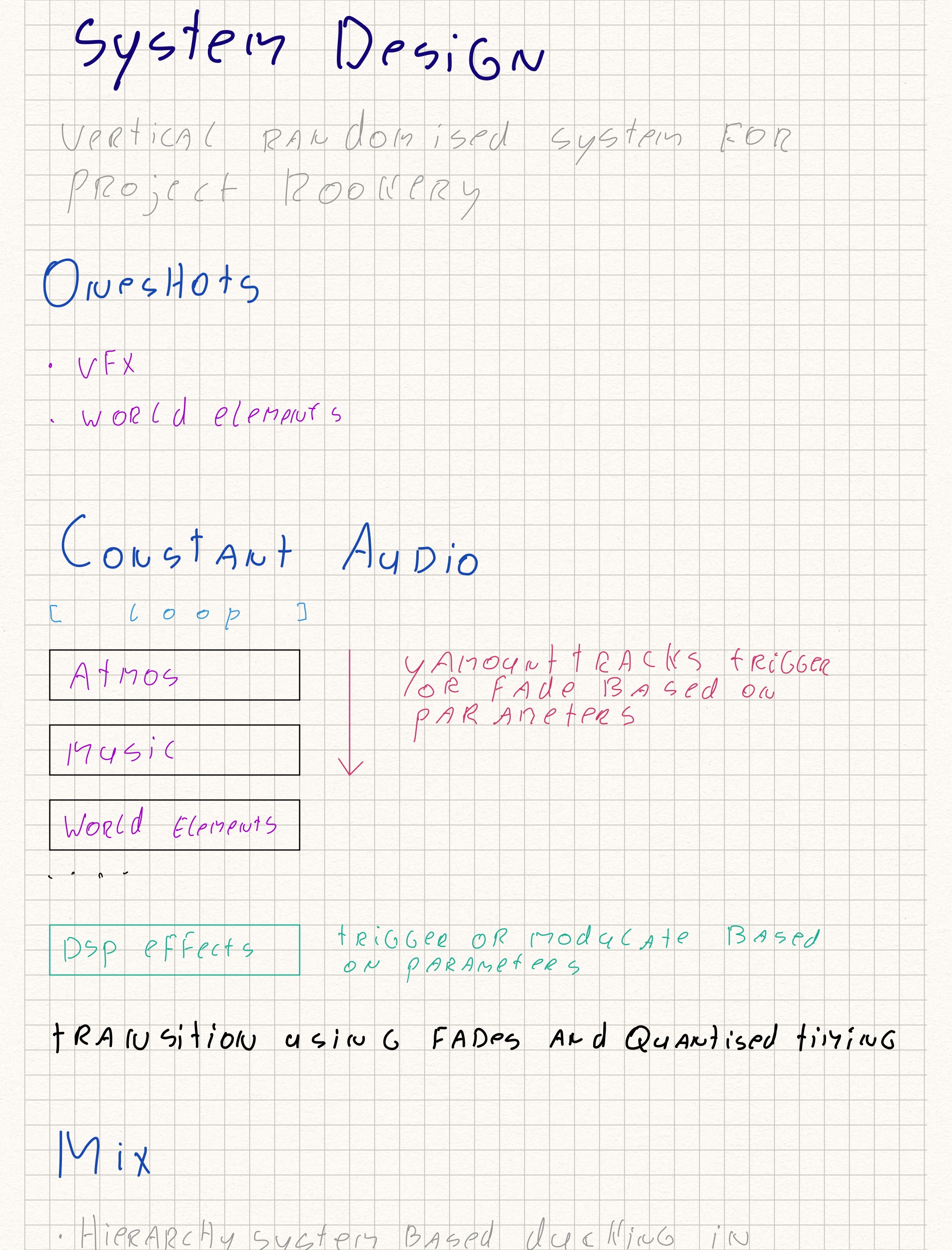

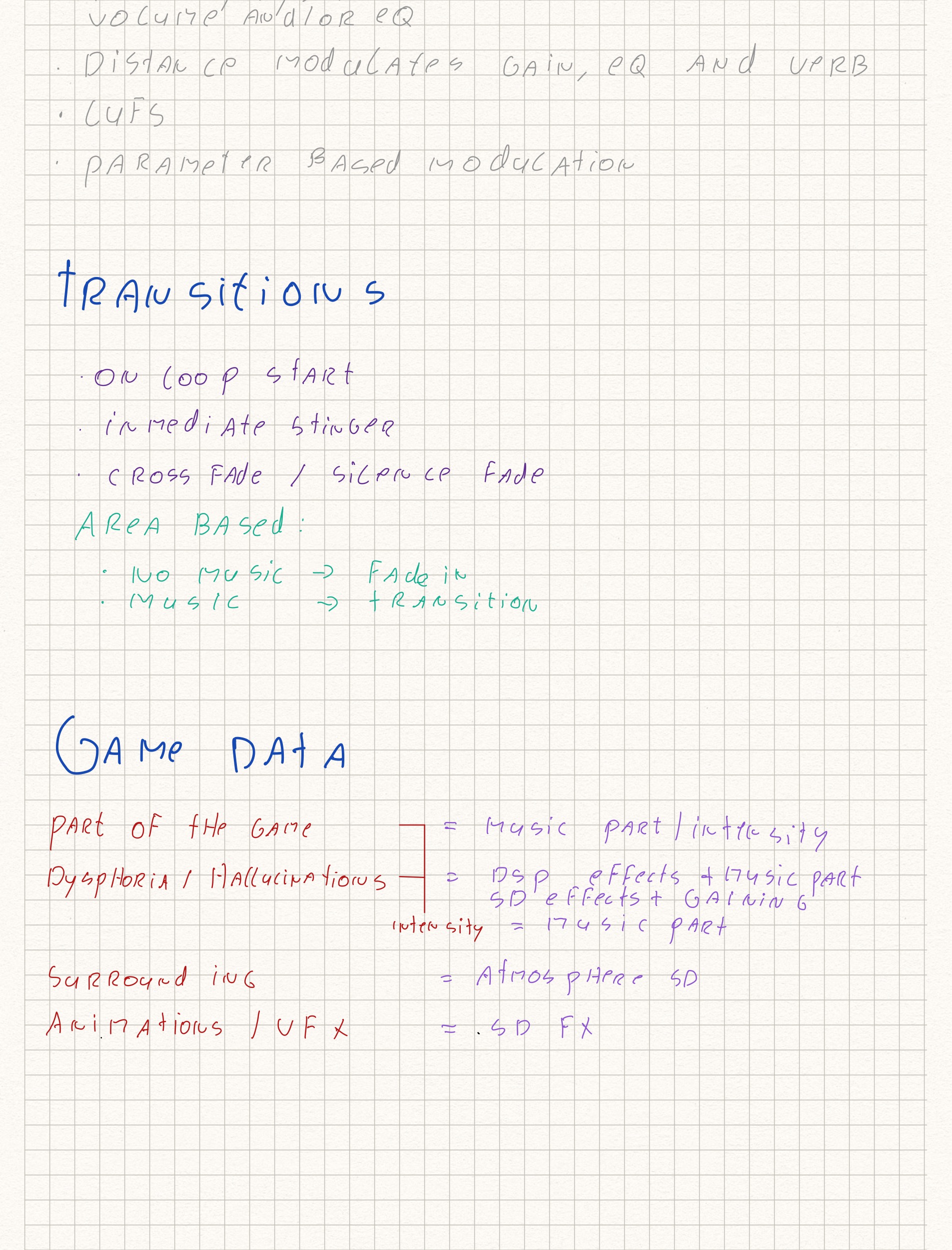

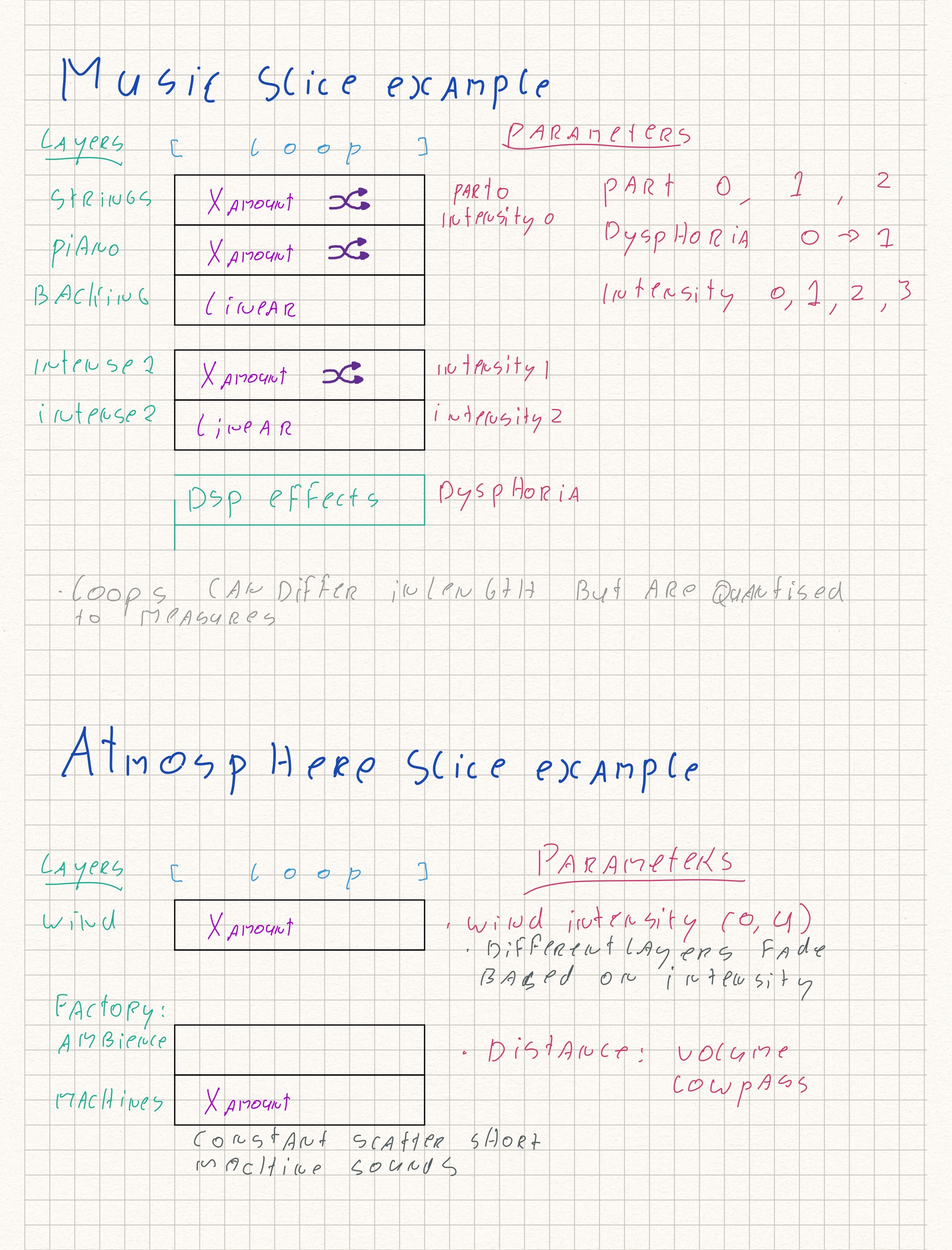

The sketches illustrate the nonlinear audio design. First, there are "constant" sounds, such as the atmosphere and music, that adjust based on parameters. Second, there are sounds generated in the environment, such as the sounds of characters (animals, monsters, etc.) or buildings. Music can transition through (cross)fading or quantised timing.
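The two music transition styles mentioned above can be sketched in a few lines. This is a minimal illustration in plain Python, not the project's FMOD setup: the function names, the 4/4 default, and the choice of an equal-power curve are all assumptions made for the example.

```python
import math


def next_bar_time(now_s: float, bpm: float, beats_per_bar: int = 4) -> float:
    """Return the time in seconds of the next bar boundary at or after now_s.

    A quantised transition waits until this moment before switching cues,
    so the change lands on the beat grid instead of at an arbitrary time.
    """
    bar_len_s = beats_per_bar * 60.0 / bpm
    # Small epsilon so a time that sits exactly on a boundary is not pushed
    # to the following bar by floating-point noise.
    bars = math.ceil(now_s / bar_len_s - 1e-9)
    return bars * bar_len_s


def equal_power_gains(progress: float) -> tuple[float, float]:
    """Return (outgoing_gain, incoming_gain) for a crossfade position in [0, 1].

    The squared gains always sum to 1, which keeps perceived loudness
    roughly constant through the fade (an assumed curve, chosen for the sketch).
    """
    p = min(max(progress, 0.0), 1.0)
    return math.cos(p * math.pi / 2), math.sin(p * math.pi / 2)
```

For example, at 120 BPM in 4/4 a bar lasts two seconds, so a transition requested at 5.0 s would be scheduled for 6.0 s. Halfway through a crossfade both layers sit at about 0.707 of full gain.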

Odd-sounding DSP effects are applied to the audio and modulated by an LFO whose intensity increases as the main character becomes more delirious. With a priority system and FMOD's snapshot functionality, a simple hierarchy has been created. Sounds such as certain hallucinations or world objects lower the amplitude or frequency range of other sounds lower in the hierarchy. Most of the data that the audio can respond to is based on the player's position in the world, along with sounds emitted by objects and creatures in the world.
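As a rough illustration of the two ideas in this paragraph, the sketch below models a delirium-scaled LFO and a priority-based ducking rule in plain Python. It does not use the FMOD API, where snapshots would handle the mixing; the function names, the 0 to 1 delirium range, and the -9 dB duck amount are assumptions made for the example.

```python
import math


def lfo_value(t_s: float, rate_hz: float, delirium: float) -> float:
    """Sine LFO whose depth follows the delirium level.

    delirium is assumed to be normalised to [0, 1]; at 0 the modulation is
    silent, at 1 the LFO swings over the full range [-1, 1]. The result
    would drive an effect parameter such as pitch or filter cutoff.
    """
    depth = min(max(delirium, 0.0), 1.0)
    return depth * math.sin(2.0 * math.pi * rate_hz * t_s)


def duck_gains(active: dict[str, int], duck_db: float = -9.0) -> dict[str, float]:
    """Return a linear gain per active sound, given each sound's priority.

    Sounds holding the highest priority currently playing stay at full
    level; everything lower in the hierarchy is attenuated by duck_db.
    """
    if not active:
        return {}
    top = max(active.values())
    ducked = 10.0 ** (duck_db / 20.0)  # convert decibels to linear gain
    return {name: (1.0 if prio == top else ducked) for name, prio in active.items()}
```

With a hallucination at priority 3 and the ambience at priority 1, the hallucination keeps a gain of 1.0 while the ambience drops to roughly 0.35. A fuller version could also narrow the frequency range of ducked sounds, as described above, rather than only lowering their level.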

Because the game has a realistic visual art style and a setting based on real life, I decided to mainly use non-synthetic instruments. For the delirium elements I used some synthesis, but mainly processed the acoustic track. This communicates to the player that when strange things start happening to the music, the character is hallucinating. Virtual orchestration is combined with recorded instruments to achieve a realistic sound on a small budget. Special thanks to Amber Veerman for performing and recording the vocals.

Project Rookery was made in collaboration with: Celine de Wijs: VFX. Joëlla van Dijk: Environment art. Sergi van Ravenswaay: Network programming.